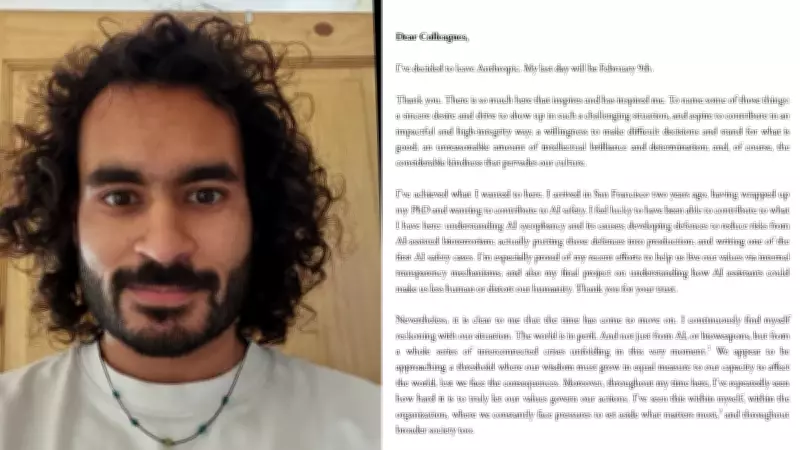

Senior Anthropic Engineer Steps Down, Issues Stark Warning on AI and Global Stability

In a move that has sent ripples through the artificial intelligence community, Mrinank Sharma, a prominent engineer at the leading AI research company Anthropic, has tendered his resignation. Sharma, who held a senior technical role at the firm, publicly declared that the world is in a state of peril, driven by the unchecked advancement of artificial intelligence and a convergence of severe global crises.

A Resignation Rooted in Deep Concern

Sharma's departure from Anthropic, known for its work on AI safety and large language models like Claude, was not a routine career move. In his resignation statement, he articulated a profound sense of alarm regarding the trajectory of technological development and its intersection with pressing worldwide issues. He emphasized that the rapid pace of AI innovation, without adequate safeguards and ethical frameworks, poses an existential threat to societal stability and human well-being.

The engineer pointed to a dangerous synergy between AI capabilities and ongoing global challenges. These include, but are not limited to, geopolitical tensions, climate change, economic inequality, and public health vulnerabilities. Sharma argued that AI systems, if misaligned or deployed irresponsibly, could exacerbate these crises rather than help solve them, leading to unpredictable and potentially catastrophic outcomes.

Implications for the AI Industry and Ethical Discourse

This resignation underscores a significant and growing tension within the technology sector, particularly among AI researchers and developers. It highlights a critical divide between the commercial and competitive drive to advance AI capabilities and the urgent need for rigorous safety protocols, transparency, and ethical governance.

Sharma's public warning adds to a chorus of voices from within the industry calling for greater caution. His decision to leave a prestigious position at a top AI firm to sound this alarm brings renewed attention to the internal debates happening behind closed doors at major tech companies. It raises questions about whether current self-regulation and development practices are sufficient to manage the risks associated with increasingly powerful AI systems.

The key concerns highlighted by Mrinank Sharma include:

- The potential for AI to be used in malicious ways that undermine security and democracy.

- The risk of AI systems making autonomous decisions with harmful real-world consequences.

- The amplification of societal biases and misinformation at a global scale.

- The diversion of resources and focus from solving fundamental human and environmental problems.

Looking Ahead: A Call for Action and Reflection

While Anthropic has not issued a detailed public response to Sharma's specific claims, the company is widely recognized for its research into AI alignment and safety. This incident, however, suggests that even within organizations prioritizing these issues, there are individuals who believe the efforts are not progressing quickly or effectively enough to keep pace with technological advancement.

Mrinank Sharma's resignation is more than a personal career change; it is a stark signal to policymakers, industry leaders, and the public. It serves as a powerful reminder that the development of artificial intelligence must be accompanied by robust, inclusive, and forward-thinking global cooperation on ethics, regulation, and crisis management. The path forward requires balancing innovation with profound responsibility to ensure technology serves humanity's best interests and does not lead it into greater peril.